I'm still working on my large SauceDB project, but during a meeting at work earlier this week my coworkers and I came up with a simple project that may be a nicer introduction to working with Bluemix and Ionic. What follows is a complete application (both back and front end) that is still fairly simple. There are multiple moving parts, so it does require some setup, but I think this guide would be a good introduction for developers. Of course, the entire thing is also up on GitHub (https://github.com/cfjedimaster/IonicBluemixDemo) with the instructions mirrored there as well. Alright, let's get started!

What are we building?

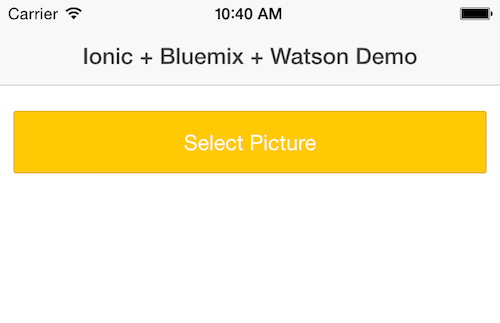

Before we get to the code, what are we actually building? We're building an application that makes use of the Watson Visual Recognition service. We'll create a mobile application that lets you select a picture and send it to the Watson service so it can try to identify what's in the picture. If this sounds familiar, it should. I blogged about this back in February. However, back then I built a simple Cordova-only demo with the service credentials hard-coded into the code. That was bad. This version is "proper", with a Node.js server running on Bluemix as a proxy to Watson. Here's a screen shot of the mobile app on start:

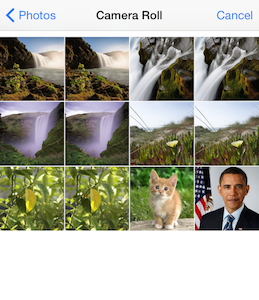

Clicking the button brings up a prompt to select an image. Note: it would be trivial to make this use the real camera, but using the photo gallery makes it easier to run on a simulator. You could also use two buttons and let the user choose.

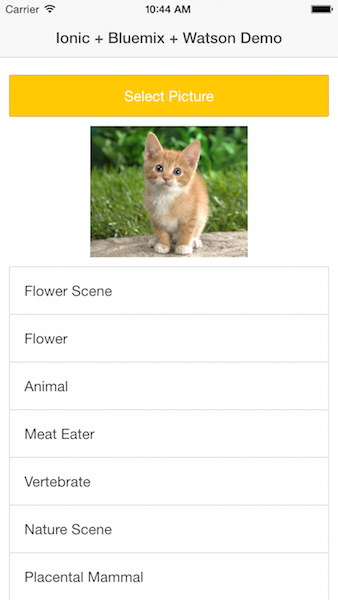

After you select the image, it will be uploaded to the Node.js server, sent to Watson for processing (I imagine Watson as millions of tiny minions), and the results returned to the mobile app. Watson returns both labels for the things it believes it found and a score for each, but for this app, we'll just display the labels.

Prereqs

In order to build this project, there are a few things you'll need to get started.

- Apache Cordova should be installed, along with at least one of the mobile SDKs. I tested with iOS, but this should work fine on Android and other platforms as well. In theory, you could try the Ionic View application, but there is one part that I'm fairly certain will not work well. I'm going to test that a bit later.

- Ionic.

- A Bluemix account. Remember, this is 100% free. Yes, you will be asked for a credit card after 30 days, but even then you can run Bluemix, and every service on there, at a free tier appropriate for testing. I think our verbiage is a bit unclear on this, but you can run it for free. Free. Did I say it was free? Yes, free.

- Node.js installed so you can test locally.

Set up

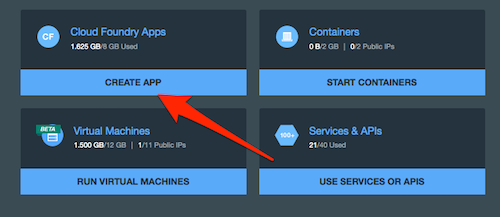

Let's begin by creating the application on Bluemix. Assuming you've logged in, begin by clicking Create App under Cloud Foundry Apps.

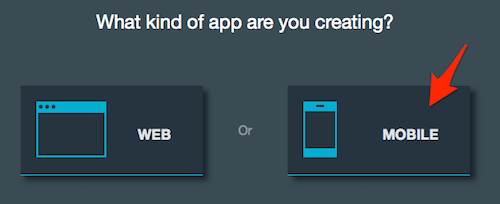

Then select Mobile for the type of app you are creating. To be clear, this will only set some default services. You can, and we will in this project, also create a web site via your Node.js application.

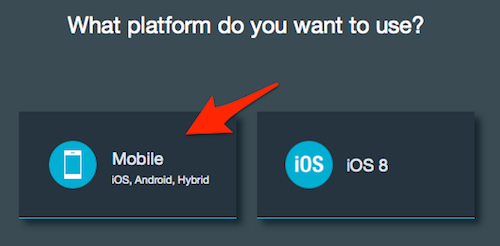

Now select the Mobile option that supports hybrid. To be clear, even though you aren't picking iOS 8, you can still deploy to iOS 8. All we're doing is driving what's automatically added to our application in Bluemix.

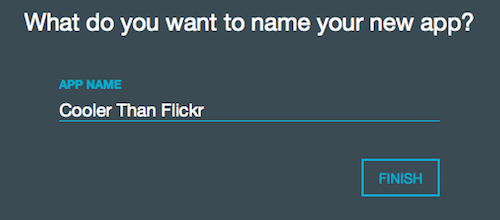

Click Continue and then give this bad boy a name. I like to name my applications optimistically:

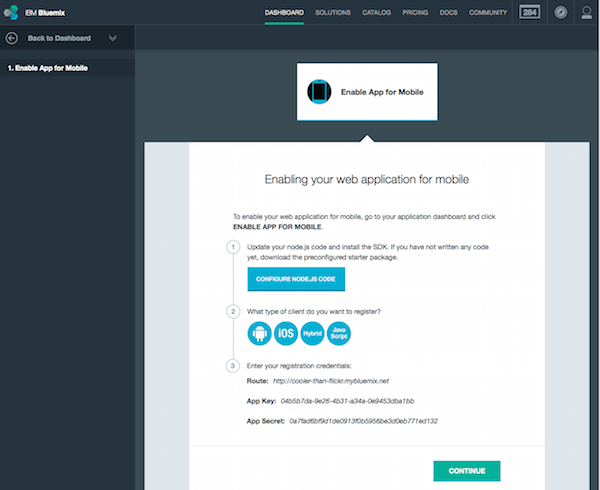

Click Finish and let Bluemix set stuff up for you. When done, you'll get a confirmation screen with some tips for where to go next.

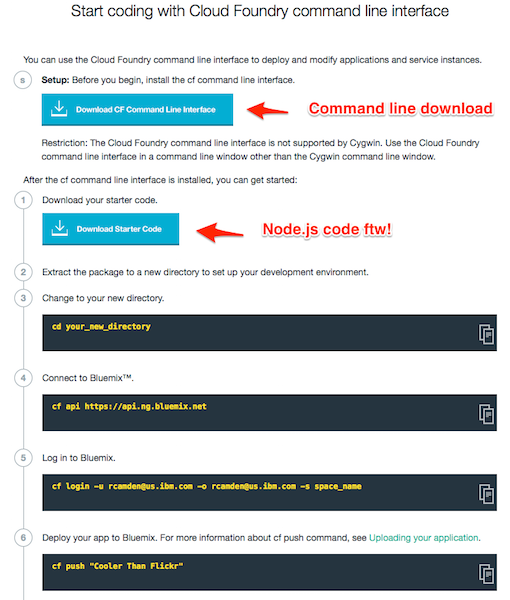

Just hit Continue, and then select the Start Coding link in the left-hand nav. This next page has a few important links on it:

That first item, "Download CF Command Line Interface", is a one-time download to get the command line tool. The command line tool, cf, lets you push your code up to the Bluemix server. You'll do this when you want to deploy the app live to the Internet. For our project here you won't ever need to do that, but you can if you want to show your app to others.

The second item, "Download Start Code", gives you the Node.js code to start your server. Normally you could download this to get started on a new application. But our project exists up on GitHub already. Before diving into the code, let's go ahead and set up the service our application will load. Click "Overview" to return to the main application home page, and then "Add a Service or API".

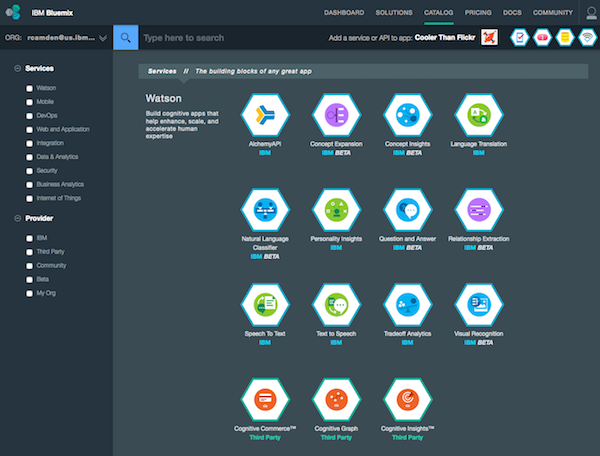

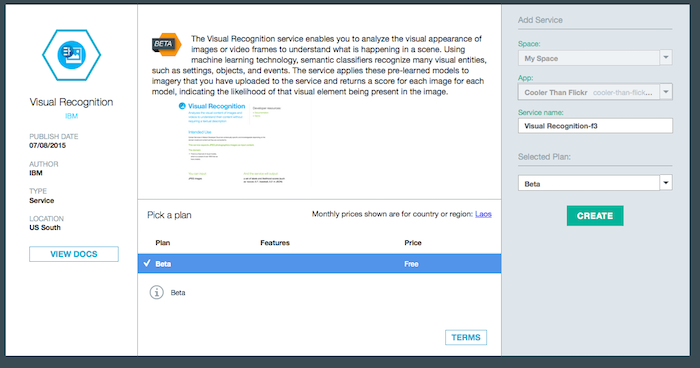

Bluemix offers quite a few services, and while I can see "Visual Recognition" there clearly, you may not. You can use the search field on top to quickly narrow down your search. When you click on the Visual Recognition service it will give you a confirmation of the price (free, well, beta, but free!) and where the service will be installed. For now you can accept the defaults.

Recap

Ok, just to recap. We created a new application in Bluemix and added one new service to it, Watson Visual Recognition. Now it's time to crack the code!

The Server

At the command line, check out the repo: https://github.com/cfjedimaster/IonicBluemixDemo

This will give you two folders: server and mobile. The server folder is where the Node.js code will run and the mobile folder is where the Cordova/Ionic code will run. We'll worry about the mobile side in a second. For now, go into the server folder via your Terminal and type:

npm install

This will install the necessary dependencies the application needs. Now, let's open the core file of the application, app.js.

```javascript
var express = require('express'),
  app = express(),
  ibmbluemix = require('ibmbluemix'),
  config = {
    // change to real application route assigned for your application
    applicationRoute : "put your route here",
    // change to real application ID generated by Bluemix for your application
    applicationId : "put your id here..."
  };

var watson = require('watson-developer-cloud');
var fs = require('fs');
var formidable = require('formidable');

/* This could be read from environment variables on Bluemix */
var visual_recognition = watson.visual_recognition({
  username: 'get this from the BM services panel for Visual Recog',
  password: 'ditto',
  version: 'v1'
});

// init core sdk
ibmbluemix.initialize(config);
var logger = ibmbluemix.getLogger();

// serve the landing page at the root context
app.get('/', function(req, res){
  res.sendfile('public/index.html');
});

app.get('/desktop', function(req, res){
  res.sendfile('public/desktop.html');
});

app.post('/uploadpic', function(req, result) {
  console.log('uploadpic');
  var form = new formidable.IncomingForm();
  // keep the original extension on the temp file; Watson needs it
  form.keepExtensions = true;

  form.parse(req, function(err, fields, files) {
    var params = {
      image_file: fs.createReadStream(files.image.path)
    };

    visual_recognition.recognize(params, function(err, res) {
      if (err) {
        console.log(err);
        // always respond, otherwise the client waits until it times out
        result.status(500).send('Error from Watson');
      } else {
        var results = [];
        for(var i=0;i<res.images[0].labels.length;i++) {
          results.push(res.images[0].labels[i].label_name);
        }
        console.log('got '+results.length+' labels from good ole watson');

        /* simple toggle for desktop/mobile mode */
        if(!fields.mode) {
          result.send(results);
        } else {
          result.send("<h2>Results from Watson</h2>"+results.join(', '));
        }
      }
    });
  });
});

// init service sdks
app.use(function(req, res, next) {
  req.logger = logger;
  next();
});

// init basics for an express app
app.use(require('./lib/setup'));

var ibmconfig = ibmbluemix.getConfig();
logger.info('mbaas context root: '+ibmconfig.getContextRoot());

// "Require" modules and files containing endpoints and apply the routes to our application
app.use(ibmconfig.getContextRoot(), require('./lib/staticfile'));

app.listen(ibmconfig.getPort());
logger.info('Server started at port: '+ibmconfig.getPort());
```

Ok, let's break this down. The first thing you'll notice is a config block:

config = {

// change to real application route assigned for your application

applicationRoute : "put your route here",

// change to real application ID generated by Bluemix for your application

applicationId : "put your id here..."

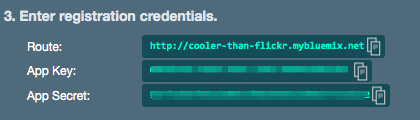

};You need to change these values to the ones specified in the Bluemix console. If you go back to that web page and click the Mobile Options link, you'll see the values there:

In the screen shot above, the app key value is the applicationId value in code.

Now let's look at this portion:

var watson = require('watson-developer-cloud');

// ... stuff

var visual_recognition = watson.visual_recognition({

username: 'get this from the BM services panel for Visual Recog',

password: 'ditto',

version: 'v1'

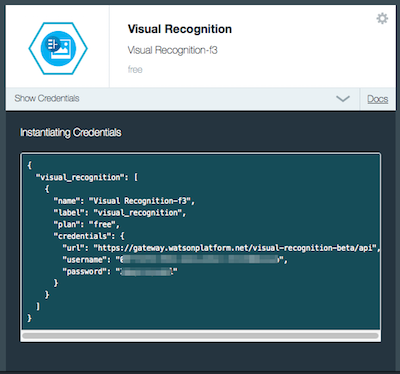

});So first off, we've added a library called watson-developer-cloud to our application. This provides simple access to various Watson services including the visual recognition one. In order to use the service you need to configure access by supplying the username and password. You can find it by clicking the "Show Credentials" link for the service.

I want to point out something kinda important here. When your app runs in the Bluemix environment, you have access to environment variables for everything, including services and their authentication information. A better approach here would be for my code to sniff for those variables and fall back to hard-coded values only when they aren't available. For now, though, we're keeping it simple. This will let us run the code locally and on Bluemix. Let's carry on through the code. (Note: I'm skipping over some boilerplate code that isn't especially important. If there is something you want to ask me about, just use the comments below.)
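Here's a minimal sketch of that better approach. It assumes the Cloud Foundry convention where bound services are published as JSON in the `VCAP_SERVICES` environment variable, keyed by service label. The `visual_recognition` key and the `username`/`password` fields under `credentials` are assumptions, so check the actual value in your app's environment variables on Bluemix. `getVisualRecognitionCredentials` is a hypothetical helper name.

```javascript
// Sketch: prefer credentials Bluemix injects, fall back to hard-coded ones locally.
// ASSUMPTION: the bound service appears in VCAP_SERVICES under 'visual_recognition'.
function getVisualRecognitionCredentials(env, fallback) {
  if (env.VCAP_SERVICES) {
    try {
      var services = JSON.parse(env.VCAP_SERVICES);
      var instances = services.visual_recognition;
      if (instances && instances.length > 0 && instances[0].credentials) {
        var creds = instances[0].credentials;
        return { username: creds.username, password: creds.password };
      }
    } catch (e) {
      // Malformed JSON: ignore it and use the local fallback below.
    }
  }
  return fallback;
}

var creds = getVisualRecognitionCredentials(process.env, {
  username: 'get this from the BM services panel for Visual Recog',
  password: 'ditto'
});
// Then pass creds.username and creds.password to watson.visual_recognition({...}).
```

Locally, where `VCAP_SERVICES` isn't set, this quietly returns the hard-coded values, so the same code can run in both places.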

```javascript
app.get('/desktop', function(req, res){
  res.sendfile('public/desktop.html');
});
```

This block will be used to give us a simple web-based version of our service. It's going to point to the same API our mobile application will use. By creating this HTML version we end up with a simple (and fast) way to test the functionality of the application before moving to the device.
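If you'd rather hit the API from a script than from a browser, the sketch below posts the same kind of multipart data the desktop form sends. It assumes Node 18 or later (for the built-in `fetch`, `FormData`, and `Blob`) and a server on `http://localhost:3000`. The field names match what the `/uploadpic` route reads (`files.image` and `fields.mode`); `buildUploadForm`, `uploadPic`, and the `'desktop'` value for `mode` are illustrative, since the route only checks that `mode` is set.

```javascript
// Sketch: a script-based stand-in for the desktop form (assumes Node 18+).
var fs = require('fs');
var path = require('path');

function buildUploadForm(buffer, fileName, htmlMode) {
  var form = new FormData();
  // 'image' matches files.image in the /uploadpic route
  form.append('image', new Blob([buffer]), fileName);
  // any non-empty 'mode' makes the route answer with HTML instead of JSON
  if (htmlMode) form.append('mode', 'desktop');
  return form;
}

function uploadPic(baseUrl, filePath, htmlMode) {
  var form = buildUploadForm(fs.readFileSync(filePath), path.basename(filePath), htmlMode);
  return fetch(baseUrl + '/uploadpic', { method: 'POST', body: form })
    .then(function (response) {
      return htmlMode ? response.text() : response.json();
    });
}
```

For example, `uploadPic('http://localhost:3000', './dog.jpg', false).then(console.log)` should print the array of labels, assuming the server is running and `./dog.jpg` exists.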

```javascript
app.post('/uploadpic', function(req, result) {
  console.log('uploadpic');
  var form = new formidable.IncomingForm();
  form.keepExtensions = true;

  form.parse(req, function(err, fields, files) {
    var params = {
      image_file: fs.createReadStream(files.image.path)
    };

    visual_recognition.recognize(params, function(err, res) {
      if (err) {
        console.log(err);
        // always respond, otherwise the client waits until it times out
        result.status(500).send('Error from Watson');
      } else {
        var results = [];
        for(var i=0;i<res.images[0].labels.length;i++) {
          results.push(res.images[0].labels[i].label_name);
        }
        console.log('got '+results.length+' labels from good ole watson');

        /* simple toggle for desktop/mobile mode */
        if(!fields.mode) {
          result.send(results);
        } else {
          result.send("<h2>Results from Watson</h2>"+results.join(', '));
        }
      }
    });
  });
});
```

Ok, so this is the main API that listens for images. To process the form I'm using a Node package called Formidable. This is a super simple package that makes working with file uploads very easy in Node. I create an instance of a form using its API and then ask it to keep extensions. Why? By default Formidable stores the file in the operating system's temporary directory under a unique name that has no extension. That file is a valid copy of the binary data you sent, but if you try to send it to Watson, the service can't handle the lack of an extension. So I simply tell Formidable to keep the same extension the uploaded file had.
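Since the extension matters, it may be worth checking it before calling Watson at all. A minimal sketch follows. The list of accepted extensions is an assumption, not something taken from the Watson documentation; `validateUpload` is a hypothetical helper; and it only inspects the file name, not the file's contents. `files` is the object Formidable hands to the `parse()` callback.

```javascript
// Sketch: reject obviously bad uploads before calling Watson.
// ASSUMPTION: this extension list is illustrative, not Watson's official list.
var path = require('path');

var ALLOWED_EXTENSIONS = ['.jpg', '.jpeg', '.png', '.gif'];

// Returns an error message, or null if the upload looks usable.
function validateUpload(files) {
  if (!files || !files.image || !files.image.path) {
    return 'No file was uploaded in the "image" field.';
  }
  // keepExtensions = true means the temp file keeps the original extension
  var ext = path.extname(files.image.path).toLowerCase();
  if (ALLOWED_EXTENSIONS.indexOf(ext) === -1) {
    return 'Unsupported file type: ' + (ext || '(no extension)');
  }
  return null;
}
```

In the route you would call it first, e.g. `var problem = validateUpload(files); if (problem) return result.status(400).send(problem);`.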

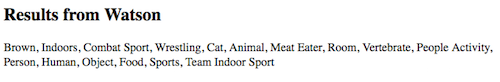

So, finally, we can use the Visual Recognition service to check the file. It is a simple matter of specifying the file (we get its path from Formidable) and then passing it to Watson. The result is a complex object including both labels and scores, but I copy out just the labels to make it easy.
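The copying loop can also be written with `map`. This sketch assumes the same v1 response shape the route uses (`res.images[0].labels[i].label_name`) and adds a guard so an empty `images` array yields an empty list instead of a `TypeError`. `extractLabels` is a hypothetical name.

```javascript
// Sketch: pull just the label names out of a v1 Visual Recognition response.
// Assumes the shape used in app.js: res.images[0].labels[i].label_name.
function extractLabels(res) {
  if (!res || !res.images || res.images.length === 0) return [];
  var labels = res.images[0].labels || [];
  return labels.map(function (label) {
    return label.label_name;
  });
}
```

Inside the callback, `var results = extractLabels(res);` would replace the `for` loop.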

This final portion:

```javascript
/* simple toggle for desktop/mobile mode */
if(!fields.mode) {
  result.send(results);
} else {
  result.send("<h2>Results from Watson</h2>"+results.join(', '));
}
```

is a somewhat lame way of handling mobile vs. desktop testing. A "proper" API would check the headers of the requester to see if it wanted HTML versus JSON and respond accordingly. Since I'm just testing, I use a form field flag to handle this instead.
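Express can do that negotiation for you: `req.accepts(['json', 'html'])` returns whichever of the two the client prefers (or `false` if neither is acceptable). To show what that involves, here is a simplified, dependency-free sketch that reads q-values from the `Accept` header and defaults to JSON. It ignores wildcard and specificity rules, and `preferredFormat` is a hypothetical helper.

```javascript
// Sketch: choose 'html' or 'json' from an Accept header (simplified).
// Unknown types and wildcards are ignored; ties go to the first listed type.
function preferredFormat(acceptHeader) {
  var best = 'json';
  var bestQ = 0;
  (acceptHeader || '').split(',').forEach(function (entry) {
    var params = entry.split(';');
    var mediaType = params[0].trim().toLowerCase();
    var format = mediaType === 'text/html' ? 'html'
      : mediaType === 'application/json' ? 'json'
      : null;
    if (!format) return;
    var q = 1; // default quality when no q parameter is given
    params.slice(1).forEach(function (param) {
      var kv = param.split('=');
      if (kv[0].trim() === 'q') q = parseFloat(kv[1]);
    });
    if (q > bestQ) { best = format; bestQ = q; } // NaN and q=0 never win
  });
  return best;
}
```

A browser submitting the desktop form typically lists `text/html` first, so it would get HTML, while a client that never asks for HTML would get JSON. That last part assumes FileTransfer doesn't request HTML explicitly.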

And that's it. The desktop form is just that - a simple form (you can see all the code here), but let's take a look at what this renders in the browser.

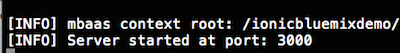

At the command line, fire up the server by typing `node app`, then open your browser to the port mentioned in the last line of your terminal:

Given that your port is probably 3000, open your browser to localhost:3000/desktop:

Select an image and then submit the form. We don't have any validation on the upload for now, so be sure to select a valid image. When done, you'll get a result.

Here's the source image:

And here is the result:

Ok, time to turn to the mobile device!

The Mobile Client

As mentioned above, the front end is going to be built using Apache Cordova and Ionic. If you check out the Git repo for the project you've got the code already. You will want to create a new Ionic project using the www folder from the Git project as the source. At the time I write this post, the Ionic CLI doesn't make it clear (I filed a bug report and it looks to be fixed already) that you can create a new Ionic project based on a local folder. At your terminal, you can do this:

ionic start mymobileapp ./mobile/www

This assumes you are in the same directory as the Git checkout and you want to call your new folder, mymobileapp. You can call it whatever you want obviously.

You'll want to add your desired platform (for example, ionic platform add ios) and then add the following plugins:

- cordova-plugin-camera

- cordova-plugin-file-transfer

You can also use ionic state restore to load plugins from the package.json file.

In theory, you'll be able to test the app right away, but let's take a quick look at the code. First, the index.html page.

```html
<!DOCTYPE html>
<html>
  <head>
    <meta charset="utf-8">
    <meta name="viewport" content="initial-scale=1, maximum-scale=1, user-scalable=no, width=device-width">
    <title></title>

    <link href="lib/ionic/css/ionic.css" rel="stylesheet">
    <link href="css/style.css" rel="stylesheet">

    <script src="lib/ionic/js/ionic.bundle.js"></script>
    <script src="cordova.js"></script>

    <!-- your app's js -->
    <script src="js/app.js"></script>
  </head>
  <body ng-app="starter">

    <ion-pane ng-controller="MainCtrl">
      <ion-header-bar class="bar-stable">
        <h1 class="title">Ionic + Bluemix + Watson Demo</h1>
      </ion-header-bar>
      <ion-content class="padding">
        <button class="button button-energized button-block" ng-click="selectPicture()" ng-disabled="!cordovaReady">Select Picture</button>
        <p>
          <img ng-src="{{pic}}" class="selPicture">
        </p>
        <ion-list class="list-inset">
          <ion-item ng-repeat="result in results">{{result}}</ion-item>
        </ion-list>
      </ion-content>
    </ion-pane>
  </body>
</html>
```

The good stuff starts inside the `<body>`, so let's focus there. The app has a grand total of one screen, so we aren't using the fancy State router or even views; we just have one view right inside the index.html file. On top is the button that we'll use to select an image. We then have a blank image that will render the one you selected. Finally, I use a simple `<ion-list>` to render the results from Watson. Now let's look at the JavaScript.

```javascript
// Ionic Starter App

// angular.module is a global place for creating, registering and retrieving Angular modules
// 'starter' is the name of this angular module example (also set in a <body> attribute in index.html)
// the 2nd parameter is an array of 'requires'
angular.module('starter', ['ionic'])

.controller('MainCtrl', function($scope,$ionicPlatform,$ionicLoading) {

  $scope.results = [];
  $scope.cordovaReady = false;

  $ionicPlatform.ready(function() {
    $scope.$apply(function() {
      $scope.cordovaReady = true;
    });
  });

  $scope.selectPicture = function() {

    var gotPic = function(fileUri) {
      $scope.pic = fileUri;
      $scope.results = [];
      $ionicLoading.show({template:'Sending to Watson...'});

      //So now we upload it
      var options = new FileUploadOptions();
      options.fileKey="image";
      options.fileName=fileUri.split('/').pop();

      var ft = new FileTransfer();
      ft.upload(fileUri, "http://localhost:3000/uploadpic", function(r) {
        //async call to Node, which calls Watson, which gives us an array of crap
        $scope.$apply(function() {
          $scope.results = JSON.parse(r.response);
        });
        $ionicLoading.hide();
      }, function(err) {
        console.log('err from node', err);
        // hide the loading overlay on failure too, or the app stays blocked
        $ionicLoading.hide();
      }, options);
    };

    var camErr = function(e) {
      console.log("Error", e);
    };

    navigator.camera.getPicture(gotPic, camErr, {
      sourceType:Camera.PictureSourceType.PHOTOLIBRARY,
      destinationType:Camera.DestinationType.FILE_URI
    });
  };

})

.run(function($ionicPlatform) {
  $ionicPlatform.ready(function() {
    // Hide the accessory bar by default (remove this to show the accessory bar above the keyboard
    // for form inputs)
    if(window.cordova && window.cordova.plugins.Keyboard) {
      cordova.plugins.Keyboard.hideKeyboardAccessoryBar(true);
    }
    if(window.StatusBar) {
      StatusBar.styleDefault();
    }
  });
})
```

Alright, let's look at this. The core logic begins with the `selectPicture` function. As I mentioned, we're only using the photo library, but you could switch to the camera or use both if you would like. Once a picture has been selected, the fun begins. We use an instance of the `FileTransfer` object to send the image to our server. Make a note of this line: `ft.upload(fileUri, "http://localhost:3000/uploadpic", function(r) {`. This URL assumes you are testing in the simulator on your computer. If you're testing on a device, change it to your machine's real IP address; if you've deployed, change it to the address of your Bluemix server. And that's it. Node.js and Watson handle the crunching, and we get back an array of results we can simply add to the scope.
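One fragile spot: `JSON.parse(r.response)` throws if the server answers with something other than JSON, such as an HTML error page from a proxy. A minimal sketch of a safer parse follows; `parseWatsonResults` is a hypothetical helper, and returning `null` on failure is just one possible design choice.

```javascript
// Sketch: turn the raw FileTransfer response text into an array of labels.
// Returns null (instead of throwing) when the body isn't a JSON array.
function parseWatsonResults(responseText) {
  var parsed;
  try {
    parsed = JSON.parse(responseText);
  } catch (e) {
    return null; // e.g. an HTML error page
  }
  return Array.isArray(parsed) ? parsed : null;
}
```

In the success callback you could call `parseWatsonResults(r.response)` inside `$scope.$apply` and show an error message instead of the list when it returns `null`.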

Wrap Up

I hope that you have found this a simple, if not necessarily tiny, example of using IBM Bluemix, Node.js, Apache Cordova, and Ionic in a real application. Remember that you can get all of the code here (https://github.com/cfjedimaster/IonicBluemixDemo). I'll be updating the readme of the repo tomorrow to be a bit more verbose. If you have any questions, comments, or suggestions, just leave me a note below!

Archived Comments

Hi Ray great article on an end to end sample. I will be referring back to this for a while.

Let me know what you find out about Ionic View App.

By the way this Bluemix thing free? :-)

If I switch to Base64 for the camera, I wonder if the FileTransfer will still work. Obviously Base64 isn't nice for real apps, but if it worked, it would be ok for a Lab/Demo session, which is what this was built for.

Very cool post! Ran this on iPhone 6, 8.4, and it seems the yellow Select Picture button is disabled. Are there any permissions that need to be enabled. Also happens with emulator.

It should become enabled when deviceready fires. Try debugging remotely w/ Safari and let me know what you see.

Seems like this was causing me problems:

```javascript
if(window.cordova && window.cordova.plugins.Keyboard) {
  cordova.plugins.Keyboard.hideKeyboardAccessoryBar(true);
}
```

Add the keyboard plugin:

cordova plugin add com.ionic.keyboard

I kept stumbling because I didn't install the three plugins. Once they were added, everything worked great. So many neat things to try from this post. Thanks!

Ok, so I was a bit worried about the setup of the new project. Did you follow the directions exactly in terms of making a new Ionic project and pointing it at www?

I might have glossed over the plugins part. ;-) I think I added the ios platform and then moved on, not seeing a command to install the plugins. Does ionic state restore do this? Clarification might help.

Yes, I think that would have worked here.

Many thanks for this guide. It's hard to know where to start without practical guides like this.

For those that want to get it working hosted by BlueMix rather than by localhost:

1. In the 'server' directory, modify manifest.yml and change IonicBluemixDemo to whatever you called your Bluemix app (e.g. I called mine camdentest-bmw):

```yaml
applications:
- services:
  - camdentest-bmw-MAS
  - camdentest-bmw-Push
  - camdentest-bmw-MobileData
  - camdentest-bmw-Mobile Quality Assurance
  disk_quota: 1024M
  host: camdentest-bmw
  name: camdentest-bmw
  command: node app.js
  path: .
  domain: mybluemix.net
  instances: 1
  memory: 128M
```

2. In directory 'server' create two files .gitignore and .cfignore with the string 'node_modules' in there. This will make sure all your node_modules don't get uploaded which takes forever and isn't needed.

3. From 'server', execute the 4 commands in Ray's post above, beginning with 'cd your_new_directory' and ending with cf push. This will upload and restart your instance on Bluemix.

4. After that you should be able to go to http://whateveryouappiscall... and see the web browser version working.

5. To get the Ionic sample to communicate with BlueMix rather than localhost just change http://localhost:3000/uploadpic to http://whateveryouappiscall...

6. This will work now for iOS but for Android I found I had to add logic to make sure a jpg file extension is added otherwise a 502 Bad Gateway is returned from BlueMix:

```javascript
//So now we upload it
var options = new FileUploadOptions();
options.fileKey="image";
options.fileName=fileUri.split('/').pop();
if(options.fileName.split('.').length == 1) {
  options.fileName += '.jpg';
}
```

These are some *damn* good tips, thank you. I'm still learning Bluemix, so stuff like the manifest.yml is new to me. Your second tip is especially useful as I've noticed in the past that updates seem a bit slow - that should help.

For tip 5, I'd probably suggest using Angular constants so it is a bit easier to tweak. In a real app you may have N routes to call.
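Following that suggestion, here's a sketch of keeping the server address in one AngularJS constant. `API` and `makeApiConfig` are hypothetical names, and `http://localhost:3000` is the same placeholder used in the post; swap in your machine's IP or your Bluemix route. The `typeof angular` guard only exists so the helper can load outside the app.

```javascript
// Sketch: one place for the server address instead of hard-coding it in ft.upload().
// 'API' and makeApiConfig are hypothetical names; the base URL is a placeholder.
function makeApiConfig(baseUrl) {
  var base = baseUrl.replace(/\/+$/, ''); // drop any trailing slashes
  return {
    base: base,
    uploadPic: base + '/uploadpic'
  };
}

// angular only exists inside the app's web view
if (typeof angular !== 'undefined') {
  angular.module('starter')
    .constant('API', makeApiConfig('http://localhost:3000'));
}
```

With `API` injected into `MainCtrl`, the upload call becomes `ft.upload(fileUri, API.uploadPic, ...)`. Because `angular.module('starter')` without a second argument retrieves an existing module, this code has to run after js/app.js creates it.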

Really, really, *really* thank you.

Thanks Ray! I'm very new to it too so was just pleased to get something working. Slowly it begins to make sense. I'd like to understand how to manage users and passwords with BlueMix's MAS. I've seen lots of howtos for Parse and Firebase on this but nothing for BlueMix. Perhaps a future Blog posting?

I assume you mean for users of the mobile app, not users like for services being called from Node?

Yep, how to manage and protect mobile app users' data. I've stored stuff using BlueMix's Mobile Data but it's open to anyone with the right key and secret rather than specific to the user.

Dude, a *lot* of the CouchDB/PouchDB/Cloudant demos I see are like that: they assume one set of data, which is weird as that is totally not real world. If you look at my blog series on SauceDB, I kind of address this. I have third-party OAuth on the client and Node.js using it to verify a valid login, and then I store that ID with the data. See if that series helps.

Really good tutorial, it cleared up a lot of concepts, thx. The Watson API specifications have changed, though:

```javascript
var VisualRecognitionV3 = require('watson-developer-cloud/visual-recognition/v3');

var visual_recognition = new VisualRecognitionV3({
  api_key:"<>",
  version_date: '<>'
});

// ...

visual_recognition.classify(params, function(err, res) {
  if (err)
    console.log(err);
  else {
    console.log(JSON.stringify(res, null, 2));
    var results = [];
    for(var i=0;i<res.images[0].classifiers[0].classes.length;i++) {
      results.push(res.images[0].classifiers[0].classes[i].class);
    }
    // ...
```
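For the v3 shape in the comment above, here's a small sketch that collects the class names and guards against missing classifiers. It assumes the response structure shown there (`images[0].classifiers[0].classes[i].class`); `extractClassesV3` is a hypothetical name.

```javascript
// Sketch: collect class names from a v3 classify() response.
// Assumes the shape: res.images[0].classifiers[0].classes[i].class
function extractClassesV3(res) {
  if (!res || !res.images || res.images.length === 0) return [];
  var classifiers = res.images[0].classifiers || [];
  if (classifiers.length === 0) return [];
  return (classifiers[0].classes || []).map(function (c) {
    return c.class;
  });
}
```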